Projects

- A learned feature detector and descriptor for bursts of images

- Noise-tolerant features outperform the state of the art in low light

- Enables 3D reconstruction from drone imagery in millilux conditions

- RectConv adapts existing pretrained CNNs to work with fisheye images

- Requires no additional data or training

- Operates directly on the native fisheye image as captured from the camera

- Works with multiple network architectures and tasks

- 3D change detection with Gaussian splatting.

- Label-free and robust to viewpoint changes between trajectories.

- New dataset of ten challenging scenes with structural and surface changes.

- Lightweight pipeline for event and RGB camera-based automated satellite docking

- We introduce a photometrically accurate physical orbital simulator

- We show advantages of mixing data-driven and geometric models

- We validate that event cameras enable docking across a broad range of practical conditions

- Find the preprint here; more details are coming soon on the project page.

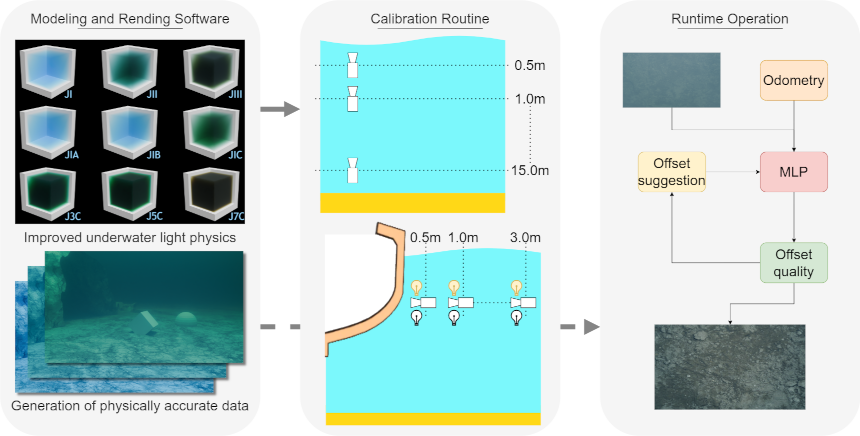

- We increase the autonomy capabilities of underwater robotic platforms.

- We improve simulation of underwater imagery within the Blender modeling software, including more accurate light behaviour in water, and models of the oceans.

- We introduce a method for in-situ water column property estimation using a monocular camera and adjustable illumination.

- We design a framework providing online guidance suggestions to maintain high-quality data collection and maximise visual coverage in a broad range of water conditions.

- Surface light field inspired regularisation to improve geometric fidelity of NeRF-based representations

- We propose a second sampling of the representation to regularise local appearance and geometry at surfaces in the scene

- Applicable to future NeRF-based models that leverage reflection parameterisation

- An effective light field segmentation method

- Combines epipolar constraints with the rich semantics learned by the pretrained Segment Anything 2 (SAM 2) foundation model for cross-view mask matching

- Produces results of the same quality as the SAM 2 video tracking baseline while being 7 times faster

- Runs in real time, making it suitable for autonomous driving problems such as object pose tracking

- An end-to-end camera design method that co-designs cameras with perception tasks

- We combine derivative-free and gradient-based optimizers and support continuous, discrete, and categorical parameters

- A camera simulation including virtual environments and a physics-based noise model

- Key step in simplifying the process of designing cameras for robots

- Robotic vision without capturing images or allowing image reconstruction

- We propose guidelines and demonstrate localisation in simulation

- Call to action for advancing inherently private vision systems

- Try the reconstruction challenge!

- We introduce burst feature finder, a 2D + time feature detector and descriptor for 3D reconstruction

- Finding features with well defined scale and apparent motion within a burst of frames

- Approximate apparent feature motion under typical robotic platform dynamics, enabling critical refinements for hand-held burst imaging

- More accurate camera pose estimates, matches and 3D points in low-SNR scenes

- Automatically interpreting new cameras by jointly learning novel view synthesis, odometry, and a camera model

- A hypernetwork allows training with a wealth of existing cameras and datasets

- A semi-supervised light field network adapts to newly introduced cameras

- This work is a key step to automated integration of emerging camera technologies

- We adapt burst imaging for 3D reconstruction in low light

- Combining burst methods locally with feature-based methods over broad motions draws on the strengths of each

- Allows 3D reconstructions where conventional imaging fails

- More accurate camera trajectory estimates, 3D reconstructions, and lower overall computational burden
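The low-light benefit of burst imaging rests on a simple principle: averaging N aligned frames whose noise is independent and zero-mean reduces the noise standard deviation by about √N. The minimal sketch below illustrates only that principle under strong assumptions: the per-frame apparent motion is a known integer shift, and the hypothetical `merge_burst` helper simply undoes it with `np.roll`. It is not the local alignment and merging used in the work above.

```python
import numpy as np

def merge_burst(frames, shifts):
    """Average a burst after undoing known integer (row, col) shifts.

    Hypothetical helper: real bursts need estimated, sub-pixel, local alignment.
    """
    aligned = [np.roll(f, (-dy, -dx), axis=(0, 1)) for f, (dy, dx) in zip(frames, shifts)]
    return np.mean(aligned, axis=0)

rng = np.random.default_rng(0)
scene = rng.uniform(0.0, 1.0, size=(100, 100))  # noise-free reference image
shifts = [(k, 2 * k) for k in range(16)]         # assumed apparent motion per frame
noise_std = 1.0                                   # low SNR: noise as strong as the signal
frames = [np.roll(scene, s, axis=(0, 1)) + rng.normal(0.0, noise_std, scene.shape)
          for s in shifts]

merged = merge_burst(frames, shifts)
single_err = np.std(frames[0] - scene)  # frame 0 is unshifted, so this is its noise
merged_err = np.std(merged - scene)     # expected to be roughly noise_std / sqrt(16)
print(f"single-frame noise {single_err:.3f}, merged noise {merged_err:.3f}")
```

With 16 frames the residual noise should drop to roughly a quarter of the single-frame level; in practice, alignment errors and motion that is not a pure shift erode this gain.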

- Jointly learning to super-resolve and label improves performance at both tasks

- Adversarial training enforces perceptual realism

- A feature loss forces semantic accuracy

- Demonstration on aerial imagery for remote sensing

- We describe the hyperbolic view dependency in Time of Flight Fields

- Our all-in-focus filter improves 3D fidelity and robustness to noise and saturation

- We release a dataset of thirteen 15 × 15 time-of-flight field images

- Fast classification of visually similar objects using multiplexed illumination

- Using light stage capture and rendering to drive optimization of multiplexing codes

- Outperforms naive and conventional multiplexing patterns in accuracy and speed
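The conventional multiplexing baseline mentioned above can be illustrated with Hadamard-derived S-matrix codes: each exposure turns on about half of the lights, and the single-light images are recovered by inverting the code matrix. This is a minimal sketch of that textbook baseline on synthetic data, not the optimized codes or the classifier from this project; the helper names `sylvester_hadamard` and `s_matrix` are illustrative.

```python
import numpy as np

def sylvester_hadamard(order):
    """Sylvester construction of a Hadamard matrix; order must be a power of 2."""
    h = np.array([[1]])
    while h.shape[0] < order:
        h = np.kron(h, np.array([[1, 1], [1, -1]]))
    return h

def s_matrix(n):
    """n x n S-matrix multiplexing code (n + 1 must be a power of 2).

    Row i lists which of the n lights are on (1) or off (0) in exposure i.
    """
    h = sylvester_hadamard(n + 1)
    return (h[1:, 1:] == -1).astype(float)

n_lights = 7
S = s_matrix(n_lights)
rng = np.random.default_rng(1)
single_light_images = rng.uniform(0.0, 1.0, size=(n_lights, 32))  # one row per light, 32 pixels
multiplexed = S @ single_light_images        # each exposure sums the lights that are on
recovered = np.linalg.solve(S, multiplexed)  # demultiplex back to single-light images
print(S.sum(axis=1))  # every exposure turns on (n_lights + 1) / 2 lights
```

Turning on several lights per exposure collects more light per image than a one-light-at-a-time sequence, which is why such codes are the usual point of comparison.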

- Unsupervised learning of odometry and depth from sparse 4D light fields

- Encoding sparse LFs for consumption by 2D CNNs for odometry and shape estimation

- Toward unsupervised interpretation of general LF cameras and new imaging devices

- A new kind of feature that exists in the patterns of light refracted through objects

- Allows 3D reconstructions where SIFT / LiFF fail

- More accurate camera trajectory estimates, 3D reconstructions in complex refractive scenes

- SIFT-like features for light fields

- Robust to occlusions, noise, and high-order light transport effects

- Each feature has a well-defined depth / light field slope

- 138°, 72-Mpix LF panoramas through a single spherical lens

- Spherical parameterization compatible with planar light fields

- World's first single-lens wide-FOV LF camera

- Generalization of Richardson–Lucy deblurring to moving light field cameras

- 6-DOF camera motion in arbitrary 3D scenes

- Deblurring of nonuniform apparent motion without depth estimation

- Novel parallax-preserving light field regularization
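For reference, the classical Richardson–Lucy iteration that this work generalizes updates a nonnegative estimate multiplicatively, x ← x · Kᵀ(d / Kx) / Kᵀ1, where K is the blur operator and d the observation. The sketch below implements only the single-image 1D case with a known, spatially uniform PSF. The light field and camera-motion generalization is not reproduced, and the function name is illustrative.

```python
import numpy as np

def richardson_lucy_1d(observed, psf, iterations=100, eps=1e-12):
    """Classical Richardson-Lucy deconvolution of a 1D signal with a known PSF.

    Assumes an odd-length PSF, so that 'same'-mode convolution with the flipped
    PSF is the adjoint (transpose) of the blur operator.
    """
    psf = psf / psf.sum()
    psf_adj = psf[::-1]
    # K^T 1: compensates for PSF mass that falls past the signal boundaries
    norm = np.convolve(np.ones_like(observed, dtype=float), psf_adj, mode="same")
    estimate = np.full(observed.shape, observed.mean(), dtype=float)
    for _ in range(iterations):
        predicted = np.convolve(estimate, psf, mode="same")  # K x
        ratio = observed / (predicted + eps)                  # d / (K x)
        estimate *= np.convolve(ratio, psf_adj, mode="same") / norm
    return estimate

# Synthetic test: two spikes on a flat background, blurred by a Gaussian PSF
x = np.full(64, 0.1)
x[20], x[40] = 1.0, 2.0
t = np.arange(-7, 8)
psf = np.exp(-t**2 / (2 * 2.0**2))
blurred = np.convolve(x, psf / psf.sum(), mode="same")
restored = richardson_lucy_1d(blurred, psf)
print(np.linalg.norm(blurred - x), np.linalg.norm(restored - x))
```

The multiplicative update keeps the estimate nonnegative, which is one reason the method suits photon-count imagery.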

Image-based visual servoing with light field cameras

- Exploits depth information in the light field without explicit depth estimation

- Derivation of light field image Jacobians

- Demonstration on robotic arm using a MirrorCam light field camera

- Outperforms monocular and stereo methods for narrow-FOV and occluded scenes

- D. Tsai, D. G. Dansereau, T. Peynot, and P. Corke, “Image-based visual servoing with light field cameras,” IEEE Robotics and Automation Letters (RA-L), vol. 2, no. 2, Apr. 2017. Available here.

Spinning Omnidirectional Stereo Camera

- Live-Streaming 3D Virtual Reality Video

- Project page at Stanford, example videos, and SIGGRAPH Asia slides (PDF with embedded video)

- R. Konrad*, D. G. Dansereau*, A. Masood, and G. Wetzstein, “SpinVR: Towards live-streaming 3D virtual reality video,” ACM Transactions on Graphics (TOG), SIGGRAPH ASIA, vol. 36, no. 6, Nov. 2017. Available here.

LunaRoo

- A hopping lunar explorer for telemetry and mapping

- Project page at juxi.net

- J. Leitner, W. Chamberlain, D. G. Dansereau, M. Dunbabin, M. Eich, T. Peynot, J. Roberts, R. Russell, and N. Sünderhauf, “LunaRoo: Designing a hopping lunar science payload,” in IEEE Aerospace Conference, 2016. Available here.

- T. Hojnik, R. Lee, D. G. Dansereau, and J. Leitner, “Designing a robotic hopping cube for lunar exploration,” in Australasian Conference on Robotics and Automation (ACRA), 2016. Available here.

Mirrored light field video camera adapter

- 3D printed base + laser-cut acrylic mirrors

- Creates a virtual array of cameras that measures a light field

- Optimization scheme finds best mirror array for a specific application

- D. Tsai, D. G. Dansereau, S. Martin, and P. Corke, “Mirrored light field video camera adapter,” Queensland University of Technology, Dec. 2016. Available here.
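A planar mirror makes a camera behave like a virtual camera whose optical center is the reflection of the real center across the mirror plane, which is how an arrangement of mirrors yields an array of virtual viewpoints. The sketch below computes only those virtual centers (single bounce) for hypothetical mirror planes written as n·p = d. It ignores virtual camera orientation, field of view, and the mirror-placement optimization described above; the helper names and the mirror layout are illustrative assumptions.

```python
import numpy as np

def reflect_point(point, normal, offset):
    """Reflect a 3D point across the plane {p : normal . p = offset}."""
    n = np.asarray(normal, dtype=float)
    scale = np.linalg.norm(n)
    n_hat, d_hat = n / scale, offset / scale   # rescale to a unit-normal plane equation
    p = np.asarray(point, dtype=float)
    signed_dist = np.dot(n_hat, p) - d_hat     # distance from the point to the plane
    return p - 2.0 * signed_dist * n_hat

def virtual_camera_centers(camera_center, mirrors):
    """Virtual viewpoints seen through each planar mirror (single reflection)."""
    return np.array([reflect_point(camera_center, n, d) for n, d in mirrors])

# Hypothetical layout: real camera at the origin looking along +z, with three
# mirrors tilted 45 degrees so each passes 0.1 m in front of the camera.
mirrors = [((1, 0, 1), 0.1), ((-1, 0, 1), 0.1), ((0, 1, 1), 0.1)]
centers = virtual_camera_centers((0.0, 0.0, 0.0), mirrors)
print(centers)
```

Each virtual center sits 0.1 m to the side and 0.1 m ahead of the real camera, so the mirrors trade image area for a spread of viewpoints, i.e. a light field baseline.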

- Robot interaction as part of the imaging process

- Visual material analysis: discriminating objects based on how they behave

- Motion magnification for stiff and fragile objects

- Closed-form change detection from moving cameras

- Model-free handling of nonuniform apparent motion from 3D scenes

- Handles failure modes from competing single-camera methods

- Approach generalizes to simplifying other moving-camera problems

Light Field Depth-Velocity Filtering

- A 5D light field + time filter that selects for depth and velocity

- C. U. S. Edussooriya, D. G. Dansereau, L. T. Bruton, and P. Agathoklis, “Five-dimensional (5-D) depth-velocity filtering for enhancing moving objects in light field videos,” IEEE Transactions on Signal Processing (TSP), vol. 63, no. 8, pp. 2151–2163, Apr. 2015. Available here.

- A linear filter that focuses on a volume instead of a plane

- Enhanced imaging in low light and through murky water and particulate

- Derivation of the hypercone / hyperfan as the fundamental shape of the light field in the frequency domain
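The hyperfan is four-dimensional, but its principle already appears in a 2D epipolar-plane image (EPI) L(s, u). A Lambertian point tracing u = u₀ + m·s puts all its spectral energy on the line ω_s = −m·ω_u, so a passband covering a range of slopes [m_min, m_max] keeps a range of depths (a volume) rather than a single plane. The sketch below applies such a 2D fan filter using an ideal binary mask in the DFT domain. The function name and slope range are illustrative, and a practical design would use a smooth, sampling-aware passband rather than an ideal mask.

```python
import numpy as np

def fan_filter_epi(epi, slope_min, slope_max):
    """Keep EPI content whose slope m (u = u0 + m*s) lies in [slope_min, slope_max].

    epi is indexed epi[s, u]. A point with slope m has its spectrum on the
    line f_s = -m * f_u, so each DFT bin is kept or dropped by its slope.
    """
    n_s, n_u = epi.shape
    f_s, f_u = np.meshgrid(np.fft.fftfreq(n_s), np.fft.fftfreq(n_u), indexing="ij")
    slope = np.full(epi.shape, np.inf)                 # bins with f_u == 0 stay excluded
    np.divide(-f_s, f_u, out=slope, where=(f_u != 0))  # slope associated with each bin
    mask = (slope >= slope_min) & (slope <= slope_max)
    mask |= (f_s == 0) & (f_u == 0)                    # always pass the DC term
    return np.fft.ifft2(np.fft.fft2(epi) * mask).real

# Two synthetic layers with different slopes (i.e. different depths)
n = 32
s, u = np.meshgrid(np.arange(n), np.arange(n), indexing="ij")
layer_a = np.cos(2 * np.pi * 4 * (u - 0.5 * s) / n)  # slope m = 0.5
layer_b = np.cos(2 * np.pi * 4 * (u + 1.0 * s) / n)  # slope m = -1.0
filtered = fan_filter_epi(layer_a + layer_b, 0.3, 0.7)
print(np.abs(filtered - layer_a).max())  # only the m = 0.5 layer should survive
```

Widening the passband to a range of slopes, rather than a single line, is what lets the filter keep everything within a depth range in focus.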

Exploiting parallax in panoramic capture to construct light fields

- We show that parallax, usually considered a nuisance, can be exploited to build light fields

- We turn an Ocular Robotics camera pointing system into an adaptive light field camera

- We demonstrate light field capture, refocusing and low-light image enhancement

- Best paper award, ACRA 2014

- D. G. Dansereau, D. Wood, S. Montabone, and S. B. Williams, “Exploiting parallax in panoramic capture to construct light fields,” in Australasian Conference on Robotics and Automation (ACRA), 2014. Available here.

Plenoptic flow for closed-form visual odometry

- Generalization of 2D Lucas–Kanade optical flow to 4D light fields

- Links camera motion and apparent motion via first-order light field derivatives

- Solves for 6-degree-of-freedom camera motion in 3D scenes, without explicit depth models

- D. G. Dansereau, I. Mahon, O. Pizarro, and S. B. Williams, “Plenoptic flow: Closed-form visual odometry for light field cameras,” in Intelligent Robots and Systems (IROS), 2011, pp. 4455–4462. Available here.

- See also Ch. 5 of D. G. Dansereau, “Plenoptic signal processing for robust vision in field robotics,” PhD thesis, Australian Centre for Field Robotics, School of Aerospace, Mechanical and Mechatronic Engineering, The University of Sydney, 2014. Available here.
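Plenoptic flow builds on the Lucas–Kanade idea of solving a linearized brightness-constancy constraint by least squares. For reference, the sketch below shows only the classical 2D, single-window case: it estimates one global translation (d_x, d_y) between two frames from I_x·d_x + I_y·d_y + I_t ≈ 0. The 4D light field derivatives and the 6-DOF camera-motion model of the paper are not reproduced, and the function name is illustrative.

```python
import numpy as np

def lucas_kanade_global(frame0, frame1):
    """Least-squares translation (dx, dy) such that frame1(x, y) ~ frame0(x - dx, y - dy)."""
    mean = 0.5 * (frame0 + frame1)  # gradients of the mid-frame reduce bias
    i_y, i_x = np.gradient(mean)    # axis 0 is y (rows), axis 1 is x (columns)
    i_t = frame1 - frame0           # temporal derivative for a unit time step
    a = np.array([[np.sum(i_x * i_x), np.sum(i_x * i_y)],
                  [np.sum(i_x * i_y), np.sum(i_y * i_y)]])
    b = -np.array([np.sum(i_x * i_t), np.sum(i_y * i_t)])
    return np.linalg.solve(a, b)    # normal equations of the constancy constraint

# Synthetic test: a smooth periodic pattern shifted by a sub-pixel amount
n = 64
y, x = np.mgrid[0:n, 0:n].astype(float)

def scene(x, y):
    return np.sin(2 * np.pi * 3 * x / n) + np.cos(2 * np.pi * 2 * y / n)

true_shift = (0.3, -0.2)
frame0 = scene(x, y)
frame1 = scene(x - true_shift[0], y - true_shift[1])
dx, dy = lucas_kanade_global(frame0, frame1)
print(dx, dy)  # close to the true shift
```

The linearization only holds for motions small relative to image structure; larger motions are typically handled with coarse-to-fine pyramids.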

Gradient-based depth estimation from light fields

- Depth from local gradients

- Simple ratio of differences, easily parallelized

- D. G. Dansereau and L. T. Bruton, “Gradient-based depth estimation from 4D light fields,” in Intl. Symposium on Circuits and Systems (ISCAS), 2004, vol. 3, pp. 549–552. Available here.
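The "ratio of differences" can be illustrated on a 2D epipolar-plane image L(s, u), assuming a Lambertian scene in which each point traces u = u₀ + m·s. Brightness constancy then gives L_s + m·L_u = 0, so the slope is m = −L_s / L_u, and pooling over a window gives the least-squares estimate m = −Σ L_s L_u / Σ L_u². The sketch below estimates a single slope for a whole synthetic EPI. The paper's exact filters and its 4D formulation are not reproduced, the function name is illustrative, and converting slope to metric depth depends on the camera calibration.

```python
import numpy as np

def slope_from_gradients(epi):
    """Estimate the dominant slope m of an epipolar-plane image epi[s, u].

    Assumes each scene point traces u = u0 + m*s, so brightness constancy
    gives L_s + m*L_u = 0; m is solved by least squares over all samples.
    """
    l_s, l_u = np.gradient(epi.astype(float))  # derivatives along s (axis 0) and u (axis 1)
    denom = np.sum(l_u * l_u)
    if denom == 0:
        raise ValueError("no texture: the u-gradient is zero everywhere")
    return -np.sum(l_s * l_u) / denom

# Synthetic EPI: 9 camera positions, 200 pixels, a single layer with slope 0.5
n_s, n_u = 9, 200
s, u = np.meshgrid(np.arange(n_s), np.arange(n_u), indexing="ij")
true_slope = 0.5
epi = np.sin(0.2 * (u - true_slope * s))
estimated_slope = slope_from_gradients(epi)
print(estimated_slope)  # close to 0.5
```

Because each estimate needs only local derivatives and a division, it parallelizes trivially, which matches the motivation stated above; windowed sums would give a per-pixel slope map instead of a single value.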